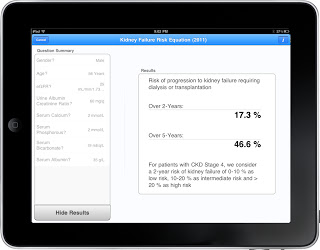

In something of a first for Nephrology, today sees the simultaneous release of a powerful tool for predicting ESRD risk in CKD patients at the World Congress of Nephrology, in JAMA and as a mobile application (free download; see screenshot). Whereas most research slowly permeates the medical community over months to years, this type of collaboration harnesses the power of modern technology to get people using this valuable information from the get-go.

The ‘Kidney Failure Risk Equation’, developed by researchers from Tufts, will be presented at the WCN in Vancouver this morning at 11am. It provides the 2- and 5-year probability of ESRD for patients with CKD Stages 3 to 5. The risk score includes the following variables: age, sex, estimated GFR, albuminuria, serum calcium, serum phosphate, serum bicarbonate, and serum albumin. It had a c-statistic of 0.92, which is remarkably high, as similar published models typically have values of ~0.7 (0.5 is a coin-toss). The score was validated in two independent populations of CKD patients (n = 8,391; 57% men and 38% with diabetes). ESRD occurred at a rate of 11% and 24% over a median follow-up of 2 and 3 years, respectively.
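For readers less familiar with the c-statistic, here is a minimal sketch of what the number measures. It is not the method used in the paper: it assumes a simple yes/no outcome and ignores follow-up time and censoring, which a survival analysis would need to handle. The function name `c_statistic` and the six patients below are hypothetical, made up purely for illustration.

```python
from itertools import product


def c_statistic(risks, events):
    """Share of (event, non-event) patient pairs in which the patient who
    had the event was given the higher predicted risk. Ties count as half.

    Simplified binary-outcome version; ignores time-to-event and censoring.
    """
    cases = [r for r, e in zip(risks, events) if e]
    controls = [r for r, e in zip(risks, events) if not e]
    if not cases or not controls:
        raise ValueError("need at least one event and one non-event")

    score = 0.0
    for case, control in product(cases, controls):
        if case > control:
            score += 1.0   # model ranked this pair correctly
        elif case == control:
            score += 0.5   # model could not separate the pair
    return score / (len(cases) * len(controls))


# Hypothetical predicted risks and outcomes (1 = reached ESRD), not study data.
risks = [0.05, 0.10, 0.20, 0.40, 0.70, 0.90]
events = [0, 0, 1, 0, 1, 1]
print(round(c_statistic(risks, events), 2))
```

A model that gives every patient the same risk scores 0.5 here, which is the coin-toss case, while a model that ranks every patient who reached ESRD above every patient who did not scores 1.0.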

This has been a long time coming. We’ve often written on this site about the need to stratify risk in CKD patients, including promoting the idea of a renal stress test, as has been done so effectively in cardiology. Today is a welcome step forward, as this model could potentially illuminate patient-doctor dialogue, guide triage and management of nephrology referrals and the timing of dialysis access placement, and help properly structure kidney transplantation protocols. Time will tell its true impact, but as someone interested in using technology to advance nephrology, this is an exciting day.

Thanks for the comment.

I agree there could possibly be some circularity introduced if the model were used for planning cadaveric renal transplantation, where the outcome is determined by one's place on a list (i.e. the algorithm says patient x should go here on the list, which then becomes self-fulfilling).

I don't foresee this being an issue in the setting of live donor transplantation or dialysis initiation. These outcomes are ultimately determined by near-term clinical need rather than by "what the model says", and the algorithm's role here would probably be in access planning, or in the timing of transplant work-up, referral, etc. As clinical need will typically be determined by the combined effects of the risk factors included in the prediction model, I don't think the outcome would be affected. Time will tell.

It will be interesting to see how this pans out – clearly the next step is to test its utility in the real world. If the score requires revision in the future because it has changed patient outcomes, I would view it as a sign of success rather than failure!

Would not the validity of this tool depend on "business as usual" treatment practices?

I.e., does USING the tool alter treatment *decisions* in ways which *change* outcomes, thus invalidating the model on which the tool is based?